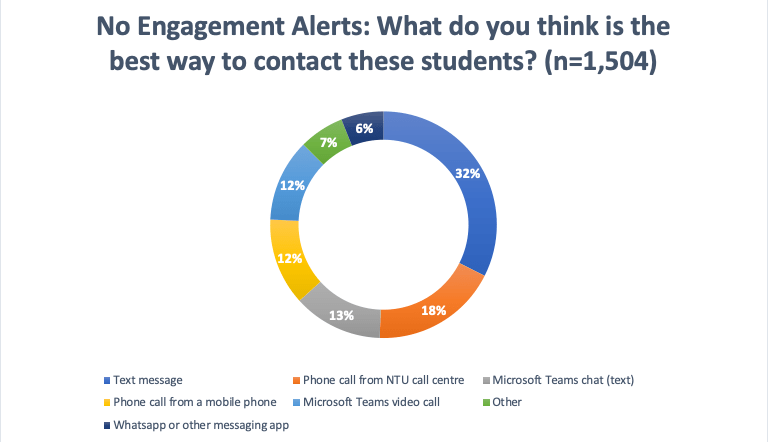

In the last piece I explained how, in our most recent Student Transition Survey (Feb-Mar 2021), we asked students how they would like to be contacted if our learning analytics platform had raised an alert. When presented with a single choice, 32% wanted to be contacted initially by text. We will build this into the next step of our call centre work and hope to run an A/B trial in Autumn 2021 to see if we can drive up the response rate to our calls.

The 7% of students who chose ‘other’ almost all asked for us to use email, although one suggested “Contact each student independently to find out what suits them. This could be effectively carried out during the onboarding process”. We do already use email as part of the process, and that will remain the case going forward. However, we are looking at data that suggests that email is ineffective at changing student behaviour. In the last post, I argued that students like email because they can control how and when they respond to it. I’ve just looked at the text included in the ‘other’ options. Student views ranged from the merciless (“email is fine, they should be checking it if they’re not that’s their fault”) to the heartfelt (“Personally, if I do disengage from my course, it might be due to my mental health. By getting a call directly may cause more anxiety than I can cope even though there are good intentions with it. Hopefully, an email will be great as it may allow some time for me to react and reply.”). There’s a horrible tension here: if we intervene slowly and cautiously, we may end up only properly supporting students when it’s too late to help them.

Following the post, I was asked on LinkedIn if the responses varied by group. Effectively, did Overseas students have a different preference to young UK students? Well, sort of.

TLDR

Use texts.

Students aren’t in a place where they want us to contact them through their messaging apps.

Yet.

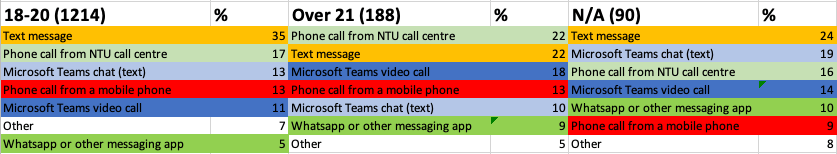

The following data is from our 2021 Student Transition Survey. It was answered online by 1,504 first year students from a population of just over 10,000 first years. Students were offered an incentive to complete the survey: they were entered into a prize draw. I’ve said previously that the students who complete the survey appear to be more engaged than average, so please bear that in mind. Please also note that the respondents were disproportionately female compared to the population and disproportionately from psychology courses (I think that they’re earning good karma for all the surveys they’re going to inflict upon the world). Respondents were also mostly white and mostly from the UK, so the group sizes will be very different.

Finally, this survey is intended for use by the institution: it gives us data that we feed directly into our working practices. We wanted usable answers to help us plan our call centre work. With hindsight, perhaps we ought to have used a question more like the one from the 2019 survey, but we were conscious that we were already asking a lot of questions. I’ve kept in the responses for students who defined at least one aspect of their background as ‘N/A or Unknown’. They often provided quite different answers to their peers. I’m not at all clear whether these are students who objected to a lack of options around how they defined their gender/ethnicity etc., or students who raced through and couldn’t be bothered to complete the details. It’s perhaps safest to think ‘hmm, interesting’ rather than start on a whole new voyage of discovery.

The full question asked was:

The Student Dashboard lets NTU know when a student appears to have disengaged from their course for 10 or more days. After 10 days, we will email students, and then a team will reach out to offer help and support. What do you think is the best way to contact these students?

Differences by group

This was meant to be a quick piece of work. Inevitably I tried laying the differences out using whatever charts Excel has to offer, but the easiest view appeared to be to simply colour and line up the cells. If you find the charts unreadable, please drop me a line.

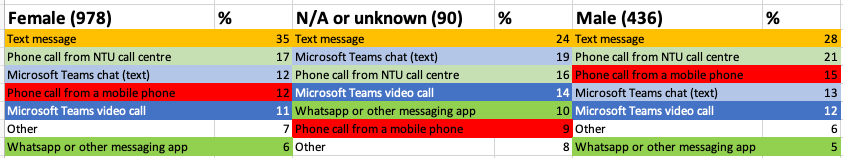

Female / Male

Seriously, use text. There’s not much difference between male and female students. Female students look more at ease with text than male students, but operationally, text appears to be the place to start.

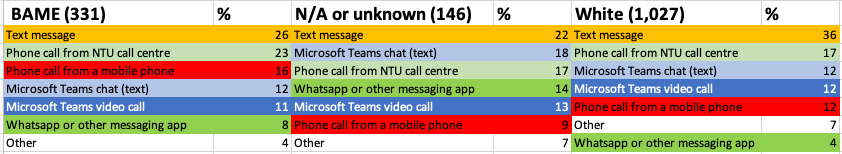

BAME/ White

The University uses a selection of different categories for students to identify themselves. Most of these groups are quite small, so I’ve arbitrarily grouped them into ‘BAME’, ‘unknown’ or ‘white’. Operationally, it makes sense to start with text messages, but it’s interesting to note that BAME students appear more comfortable being rung by the call centre without an intermediary text step than their white peers. At the time of writing we haven’t analysed differences in response rate by ethnicity (sorry). BAME students also appeared to favour the option of being rung from a mobile number.

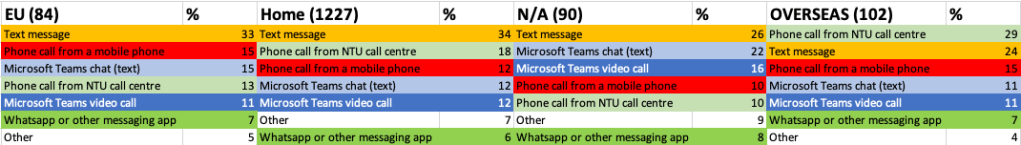

Nationality

For the first time we see something other than text as the preferred option. Overseas students appeared to prefer to receive a call directly rather than a text. It’s also quite interesting to see that EU students appeared less interested in a call from the call centre.

Age

In the UK, students are considered ‘mature’ if they are aged 21 or over when they start their studies. Marginally more of this group wanted to receive a call from the call centre without the intermediary text. They were also more likely to favour verbal communication than their younger peers.

Conclusion

That’s a lot of analysis to say that if you’re going to ring students, ‘start with a text message’. It’s interesting that students have chosen text. Text feels slightly old-fashioned, and a slightly poorer form of communication compared to WhatsApp or other more modern messaging services. It is, however, pretty universal and, perhaps most importantly, doesn’t intrude into spaces that students perceive as their private area.

Find out more about our findings at our free end-of-project event

Wednesday 14th July
