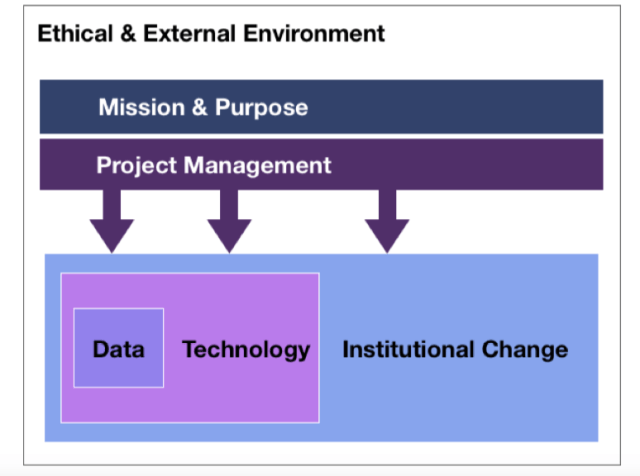

Between 2015 and 2018, we worked alongside some brilliant colleagues at KU Leuven and Leiden University on the ABLE Erasmus+ Project. I think that we spent longer on learning analytics infrastructure and operations than we would have liked. However, doing so gave us really interesting insights into the challenges of implementing learning analytics. By the end of the project, we felt that there were six fundamental pillars that any institution needs to get right in order to implement learning analytics successfully.

Enabling factors

- Ethical and external environment

It is essential to meet not just the legislative requirements, but the broader ethical considerations too. Even on a continent like Europe with shared privacy legislation, there are interesting differences in cultural norms between countries.

- Mission and purpose

At the highest level, what is the purpose of learning analytics and what does that mean in practice? These issues need to be clearly articulated and shared among the key stakeholders in an institution. I’d argue that institutional change requires a senior sponsor who can help integrate the work into organisational structures and help unblock the inevitable problems when they arise.

- Project management

Our colleagues at KU Leuven were able to develop a learning analytics platform using relatively limited resources and sheer hard work, but I think that’s relatively unusual. Learning analytics is complicated and requires really deep integration into institutional systems and processes. It requires effective and properly resourced project management, not just for the pilot and initial implementation, but also to help resolve the almost inevitable problems that will be uncovered with the institution’s underlying data.

Operational factors

- Data

I think I’ve written about this elsewhere, but consistent, reliable data is clearly essential. I’d argue that it is also much harder to achieve than we would hope (see project management).

- Technology

I strongly believe that learning analytics is a technology-enabled process. It’s not a technology project, but it can’t happen without processing power and interconnected computers. It needs software, algorithms and ways to present data in a coherent, actionable manner.

- Institutional change

Everything else is irrelevant unless there’s someone in place to use the resources; without them, it’s a waste of money. I’d argue that this is the most important and most difficult part of the project to get right. At the time of writing we have approximately 1,500 staff users and 30,000 student users. They all need information to help them interpret the data meaningfully and improve student engagement and success.

A more detailed description of the model, with recommendations for senior managers, external technology providers, internal IT departments, staff users and students, is available on the ABLE Project website.