I’m not apocalyptic about the role of big data in society generally and education specifically, but I do feel strongly that we all have a responsibility to stand back and think about how we accept and use technology.

The brilliant writer Yuval Noah Harari has written a great piece on the philosophical challenges of what he terms ‘Dataism’ and the implications of putting our faith in big data to know us better than we know ourselves.

Check your photo – inbuilt bias?

The technology writer Cat Hallam wrote this interesting piece on Twitter about the problems she faced when the Gov.uk passport website’s facial recognition software appeared to have trouble with her photograph. In the ensuing Twitter discussion, she acknowledges that the quality of the picture may be the issue, but it’s hard to avoid the suspicion that there’s a broader problem: the software may simply be struggling to cope with her skin colour. It seems the practical embodiment of the anxieties raised by Algorithms of Oppression and Weapons of Math Destruction.

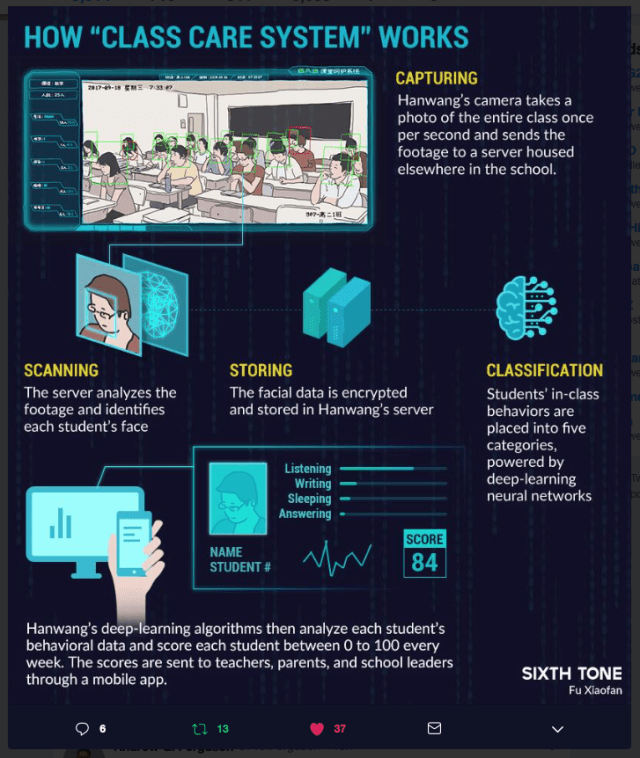

Class Care System

My children’s secondary school has a behaviour system that sends me updates about their (mostly brilliant) behaviour. The data isn’t terribly useful and I have grave concerns about their privacy. It is of course possible to argue that the system is being designed to enable me to parent better and to be involved in their education, but there are ethical issues.

However, Class Care appears to be the same idea dreamt up in a totalitarian state, with scant regard for privacy.