How ideological are the algorithms used in learning analytics?

At the core of any learning analytics process is the algorithm. Algorithms are just lines of code, so they’re neutral, objective, unproblematic. Of course we have to take into account GDPR’s legal requirements on privacy, destroying old data and so on, but surely we don’t need to worry about the nature of the algorithms that drive learning analytics.

Do we?

The internet was meant to be a great liberating force. All human knowledge available at the click of a mouse or increasingly through your phone. And I sincerely believe that the people who build search algorithms believe that too. The problem is, however, that a spirit of enlightenment doesn’t pay the bills. The business decisions needed to generate a profit aren’t necessarily the same as those needed to create an informed, educated society.

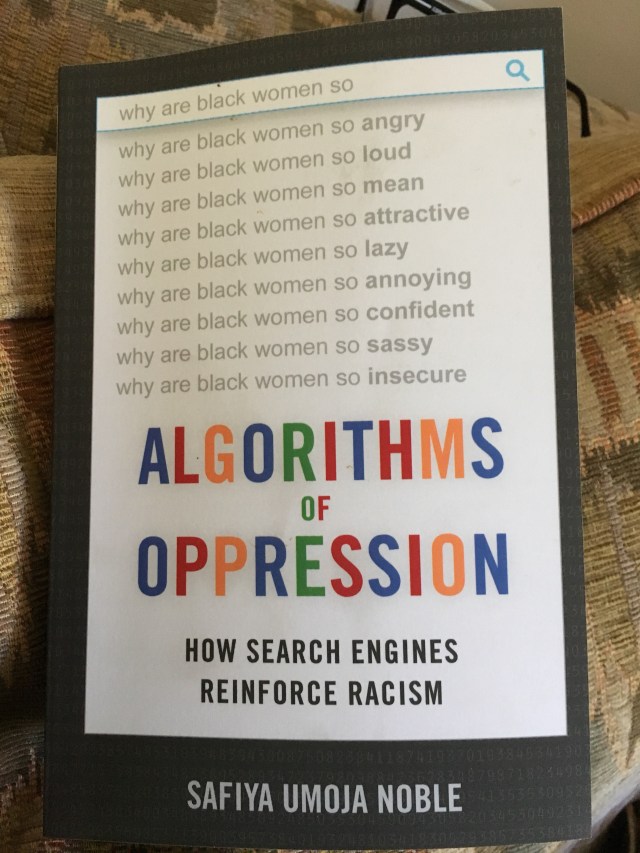

Safiya Umoja Noble brings this tension into sharp focus through the lens of racism in search. Her book, Algorithms of Oppression, has a number of important threads: the under-representation of women and ethnic minorities in Silicon Valley, the right to be forgotten, and underlying bias in western approaches to cataloguing knowledge.

However, the core of Noble’s work began in 2011 after the realisation that typing ‘Black Girls’ into Google Search brought up a lot of pornography (she acknowledges that this is now less likely). More recently she finds examples of very unsubtle racism, sexism in image searches, and racist conspiracy websites appearing high in Google searches.

But search engines just reflect what the users are into, right?

If there are lots of people looking for pornography featuring Black women surely that’s just evidence of Google providing a service.

Perhaps.

But Google and other search engines don’t passively reflect interests of their users. They reflect the interests of their customers: advertisers and people prepared to pay to get to page 1 on a search.

We forget this. Search engines look and feel like they’re libraries, temples of knowledge and wisdom. But they’re not. They’re marketplaces for the organisations and individuals with the deepest pockets. There is a huge amount of editorial control that takes place every day on search engines, but it’s not based on balance, ethics or the needs of civic society; it’s based on money. Furthermore, the people with money tend to be overwhelmingly from one group in society: White, male, well-educated and (dare I suggest) entitled. This doesn’t necessarily make search a bad thing, but it makes it biased towards winners, towards the people already at the top of society, the economic and cultural elites.

And as Noble points out, Google is a monopoly. “Googling” means searching on the internet. Google provides genuinely useful ‘free’ services and has PR to die for. However, it’s not a charity or government department with a public service mandate. This means that we increasingly rely on one single source of data that is framed by a very specific business model and a variety of biases, both conscious and unconscious.

Zeynep Tufekci has noticed a separate, but related, strand in her work on YouTube. Humans get bored quickly; the best way to keep people looking at videos (and therefore watching adverts) is to show more and more extreme examples. Tufekci jokes that if you start looking up videos on vegetarian recipes, YouTube will up the ante until you find yourself watching videos on becoming a vegan. Which is funny until you start to look at the problem through a more sensitive lens such as race, gender or politics. Moreover, Tufekci questions whether the algorithm does this because a human being designed it this way, or through machine learning. If the latter, then YouTube is potentially driving division in society in order to generate advertising revenue.

Unintentionally.

So what’s the connection to learning analytics?

The algorithm at the heart of any learning analytics system is not neutral. There will be an ideology driving its development. I’ve previously commented on how we deliberately excluded race and other demographics from our algorithm, but that doesn’t mean that the Dashboard doesn’t have an ideological stance.

It does.

The tool is incredibly individualistic, arguably neo-liberal in outlook. We look at how engaged individual students are and we give them a ranking. We did this with our eyes wide open, seeking to avoid forms of bias, but there’s a strong ideology underpinning our work.

I’d argue that this is an ethical and efficient way of targeting support at the students who need it the most. However, we have based our model only on the relationship between individual student effort and academic success. We don’t take into account problems in the provision of education to students: factors such as the quality of teaching, problems with administration, lack of library resources at pressured times or the campus estate. Operationalising those other factors is difficult, perhaps impossible, but we have made decisions that have ideological undertones.
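To make the point concrete, here is a minimal sketch of what an individual-only engagement ranking can look like. It is not our algorithm or Solutionpath’s: the `StudentActivity` fields, the `WEIGHTS` values and the function names are all hypothetical, chosen purely for illustration. What matters is what is missing from the inputs.

```python
from dataclasses import dataclass


@dataclass
class StudentActivity:
    # Hypothetical inputs: every field describes something the student did.
    # Nothing here describes teaching quality, administration, library
    # provision or the campus estate.
    student_id: str
    logins: int
    library_loans: int
    submissions: int


# Illustrative weights only; not taken from any real system.
WEIGHTS = {"logins": 1.0, "library_loans": 2.0, "submissions": 5.0}


def engagement_score(activity: StudentActivity) -> float:
    """Weighted sum of one student's own activity counts."""
    return (
        WEIGHTS["logins"] * activity.logins
        + WEIGHTS["library_loans"] * activity.library_loans
        + WEIGHTS["submissions"] * activity.submissions
    )


def rank_by_engagement(activities: list[StudentActivity]) -> list[str]:
    """Return student ids ordered from least to most engaged."""
    return [a.student_id for a in sorted(activities, key=engagement_score)]


cohort = [
    StudentActivity("s1", logins=20, library_loans=3, submissions=4),
    StudentActivity("s2", logins=2, library_loans=0, submissions=1),
    StudentActivity("s3", logins=10, library_loans=1, submissions=2),
]

# Students at the front of the list would be the first flagged for support.
print(rank_by_engagement(cohort))
```

In a model shaped like this, a low score can only ever be read as a property of the student. If s2 has barely logged in because a module was badly timetabled, the ranking has no way of knowing, because that information was never an input.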

Ultimately, we worked with our technology partners, Solutionpath, to build a tool to identify students who most needed support. However, by doing so, we created an environment in which the ‘problem’ sits with the student. We have designed a system to fix the student, not necessarily the wider underlying problems in higher education. We will always need to be conscious of this bias and ensure that staff development reminds colleagues of it.

I wonder what biases, assumptions and ideologies sit at the heart of your learning analytics resource.
